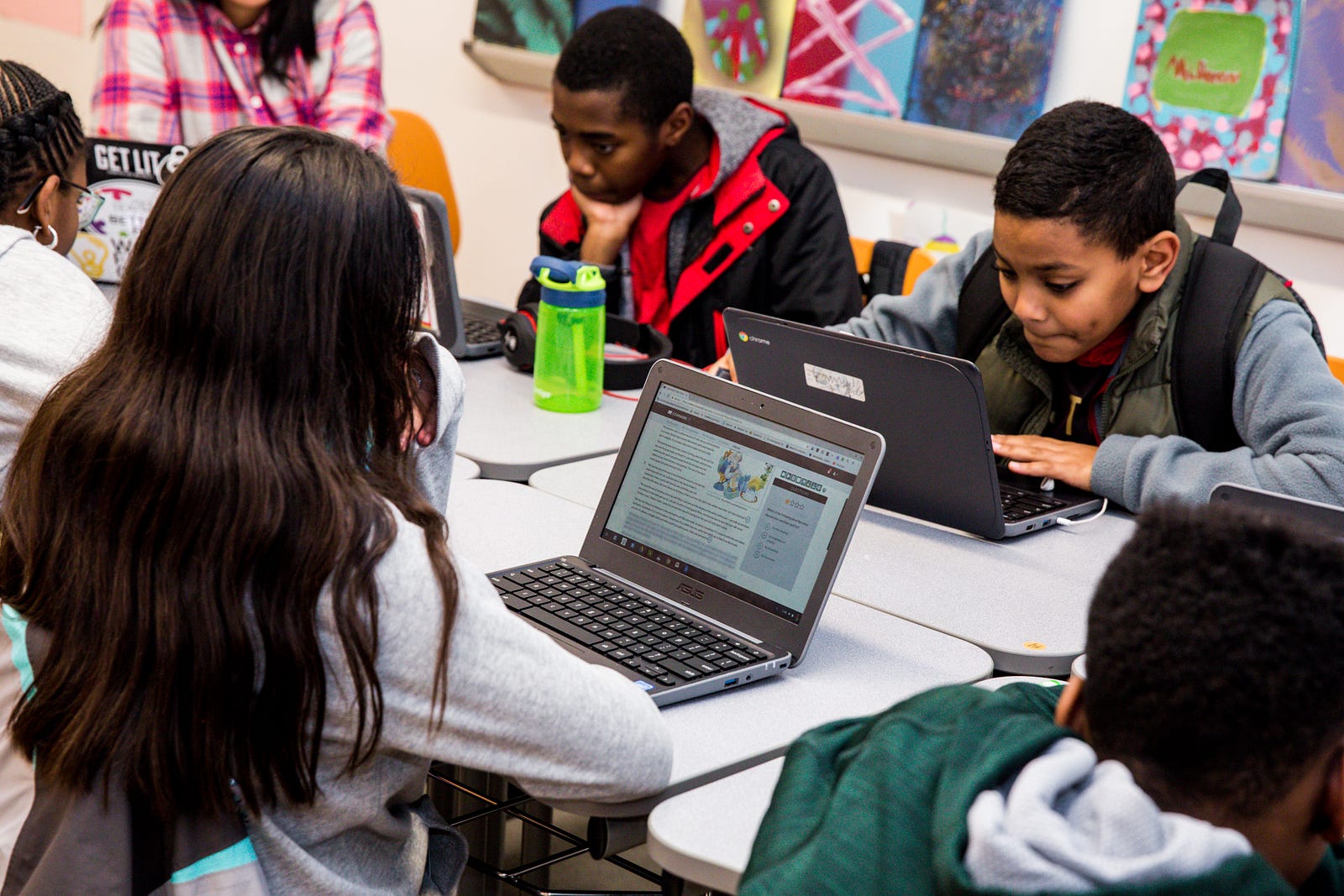

Our Commitment to Research

At CommonLit, we believe that it is our responsibility to contribute to the fields of learning and education research, and to involve stakeholders like teachers and students directly in this process whenever possible. Internally, our cross-disciplinary Cognitive Science Reading Group translates cutting-edge research into practical applications across our platform. Through the support of Schmidt Futures, we were also recently given the opportunity to work with external research partners at Georgia State University’s (GSU) Departments of Applied Linguistics and Learning Sciences. With Professor Scott Crossley’s research team at GSU, CommonLit is helping to spur the development of new, advanced, open-source algorithms to measure the readability of educational texts.

As this project moves into its final phase, we are thrilled to share an update on our work over the last year, and to invite open-source developers to participate in the culminating competition. Our hope is that the new, free, and open resource derived from this project will drive the field forward at a time when equitable access to innovative new tools is sorely needed.

Project Background

It goes without saying that reading is a critical skill for academic success. What students are assigned to read matters a lot. The best practice is to select texts that are worthy of students’ time and attention, and appropriate for their reading level. One important tool that the education field has relied on to facilitate this practice is the readability formula: a formula that assesses the difficulty of a text.

Today, the field relies heavily on two types of readability formulas: traditional formulas like Flesch-Kincaid Grade Level (FKGL) and commercially available formulas. However, both have challenges. Traditional readability formulas lack construct and theoretical validity because they are based on weak proxies of text decoding and syntactic complexity, and ignore many text features that are important components of reading models, including text cohesion and semantics. Moreover, many traditional readability formulas were normed using readers from specific age groups on small corpora of texts taken from specific domains. Commercially available formulas often lack suitable validation studies and suffer from transparency issues. Finally, access to the commercial formulas is cost-prohibitive for many schools, small publishers, and education technology organizations. In short, while readability formulas are omnipresent, they have limited independent evidence of success and are not all freely available.
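
To make the "weak proxies" point concrete, here is a minimal sketch of how FKGL is computed. The formula itself (0.39 × words per sentence + 11.8 × syllables per word − 15.59) is the standard published one. The function names and the syllable counter are our own illustrative assumptions: the counter is a rough vowel-group heuristic, not the method used by any particular tool. Notice that nothing in the score looks at meaning or cohesion, only at sentence length and word length.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic (an assumption): count vowel groups, drop a silent final 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def fkgl(text: str) -> float:
    """Flesch-Kincaid Grade Level from sentence length and syllables per word."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


simple = "The cat sat. The dog ran."
dense = "Traditional readability formulas ignore cohesion and semantics entirely."
print(fkgl(simple) < fkgl(dense))
```

Two passages with the same sentence and word lengths receive the same grade level under this formula, however different they are in vocabulary familiarity or logical structure.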

Progress to Date

Over the past year, CommonLit and GSU have made tremendous progress. First, the CommonLit team assembled a corpus of thousands of authentic reading passages appropriate for educational use, from a wide variety of domains. Because the texts were selected with classroom use in mind, the resulting algorithm will be trained to be attuned to the specific language quirks and complexities of an educational context. Next, the GSU team ran numerous analyses to ensure a balanced and representative data set. Finally, we reached out to our community of teachers at CommonLit, and thousands volunteered to help rank the complexity of the texts through a process called pairwise comparison. (Thank you to our teachers for contributing to this research!) After extensive data cleanup and analysis at GSU and CommonLit, we are now armed with everything we need to launch the final phase of the project: The Readability Prize.
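
For readers curious how pairwise comparisons can become a ranking, here is a minimal sketch using a Bradley-Terry model, one common way to turn "text X is harder than text Y" judgments into a score per text. This is an illustration under our own assumptions, not a description of the analysis GSU ran: the function name, the `prior` smoothing (half a virtual win and half a virtual loss against a reference text of strength 1), and the toy data are all hypothetical.

```python
from collections import Counter


def bradley_terry(comparisons, prior=0.5, n_iter=200):
    """Estimate a difficulty score per text from (harder, easier) judgments.

    Each text also plays 2 * prior virtual games against a reference text of
    fixed strength 1.0, winning half of them, which keeps every score finite.
    """
    wins = Counter()
    games = Counter()
    for harder, easier in comparisons:
        if harder == easier:
            continue  # a text cannot be compared with itself
        wins[harder] += 1
        games[frozenset((harder, easier))] += 1

    items = sorted({t for pair in games for t in pair})
    strength = {t: 1.0 for t in items}

    for _ in range(n_iter):
        updated = {}
        for i in items:
            # Virtual games against the fixed reference text.
            denom = 2 * prior / (strength[i] + 1.0)
            for j in items:
                n_ij = games[frozenset((i, j))] if j != i else 0
                if n_ij:
                    denom += n_ij / (strength[i] + strength[j])
            updated[i] = (wins[i] + prior) / denom
        strength = updated
    return strength


# Toy judgments: each tuple means "first text was judged harder than second".
judgments = [("C", "B"), ("C", "A"), ("B", "A"), ("C", "B"), ("B", "A"), ("A", "B")]
scores = bradley_terry(judgments)
print(sorted(scores, key=scores.get, reverse=True))
```

A higher score means the text was judged harder more often, after accounting for which texts it was compared against. Individual judgments can disagree, as the last toy pair does, and the model still produces one consistent scale.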

The Final Competition

In May, CommonLit will launch a three-month competition on Kaggle, inviting data scientists and machine learning experts from around the world to create a robust new algorithm to measure the readability of educational texts. With $60,000 in prizes available, we expect thousands of entries from the best minds in data analytics, learning sciences, linguistics, and natural language processing.

Be sure to sign up for CommonLit’s newsletter and check the Kaggle site to stay up to date on competition details. We look forward to sharing the results of the competition — including exciting next steps for the practical application of the winning algorithm — this fall.